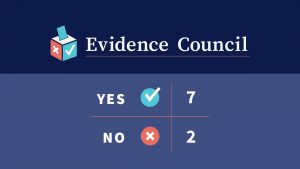

In its first vote-based position, the newly launched Children and Screens Evidence Council voted 7–2 in favor of requiring parental consent for children and teens under 18 to access AI companion platforms – software systems designed to engage users in ongoing, personalized, conversational relationships rather than perform a single task.

Implications for Children, Families, and Policymakers

A strong majority of Children and Screens Evidence Council members agree that parental permission should be required for children and teens under 18 to use AI companion platforms, given growing concerns about safety, development, and mental health. In a recent vote, seven out of nine experts supported requiring parental consent, while two raised concerns about how such policies should be designed.

Why Most Experts Support Parental Permission

- Psychological and emotional risks: Experts pointed to the risk of emotional dependency, distorted social expectations, exposure to sexual or inappropriate content, displacement of sleep and offline relationships, and dangerous advice.

- Developmental vulnerability: Experts emphasized that children may lack the skills to fully evaluate the risks associated with habitual AI companion use.

- Importance of parent-child dialogue: Experts noted that parents need to know which platforms their child is using, and that a consent requirement could prompt meaningful conversations about desired uses and potential risks.

- Lack of adequate industry safeguards: Several Council members stressed that technology companies have not yet demonstrated sufficient protections for young users, making parental involvement a necessary safeguard.

Concerns Raised by Dissenting Experts

Two dissenting experts cautioned against a blanket parental-permission rule, raising important considerations for policymakers and families:

- Restricting access could unintentionally reduce trust, motivating secretive use.

- Marginalized youth who may need private spaces to explore sensitive issues may lose access to potentially supportive tools if policies are too rigid.

- Parental permission may be appropriate for younger children, but the age threshold should match the ages already used for other types of platforms (such as 12 or 13) rather than 18.

The Takeaway

Requiring parental permission for minors to use AI companions would be a positive step, but experts agree that it does not replace shared responsibility among parents, platforms, and policymakers, all of whom have a role in keeping children safe online.

Naomi Baron, PhD

American University

“Drastic times call for drastic measures. AI companions are proving not just prevalent but too often dangerous. While not a perfect solution, it’s reasonable to peg independent choice for using them to the same age most youth complete high school and assume greater responsibility for individual decision-making.”

Kelly Brownell, PhD

Duke University

“Media companies have not provided adequate protections for youth. Parental permission is one positive step that should be taken, but should not be used to justify corporate and government inaction.”

Dimitri Christakis, MD, MPH

University of Washington; Children and Screens

“Although there is minimal research on this topic at present there are ample reasons to be concerned about the risks children face using [AI companions]. Parents sign consent for student field trips that likely carry less risk.”

Lauren Hale, PhD

Stony Brook University

“Parents should know what platforms their children are using. The requirement of parental consent will facilitate healthy conversation about motivations, expectations, and limitations.”

Colleen Kraft, MD, MBA, FAAP

University of Southern California School of Medicine; Children’s Hospital Los Angeles

“Parental permission is a safety mechanism, and it stimulates opportunity to talk between parents and kids on the “why” of an AI companion (i.e., is it for CBT, coaching, etc., or is there something that merits an actual friend or therapist?).”

Marc Potenza, MD, PhD

Yale University

“Given a limited understanding of the potential impacts of AI companion platforms on developing youth and reports of suicides among teenagers linked to AI companions, a precautionary principle supports that parental permission be required for minors.”

Paul Weigle, MD

UConn School of Medicine; Hartford Healthcare

“Habitual use of AI companions in teens is commonplace. Companions may cause significant psychological harm in youth: displacement of needed socialization and sleep, dangerous advice, distortion of beliefs about relationships, sexual content, and even AI psychosis. Children and teens cannot weigh these risks against the possible benefits of enjoyment, learning, and virtual support. Minors require parents to make an informed decision about whether their child can safely engage with AI companions.”

Desmond Patton, PhD, MSW

University of Pennsylvania

“A blanket parental-permission rule can replace the conversations parents should be having with kids about AI, trust, privacy, and attachment. Cutting access often drives secrecy, not safety. It can also harm marginalized youth who may need a private first step to process delicate topics (e.g., coming out). I prefer an opt-in model after a parent-child conversation, with strong default safeguards.”

Ellen Wartella, PhD

Northwestern University

“The age is much too old. By 18 most kids are quite independent. Maybe age 12 or 13 which has been more typically used in other media concerns.”

Important Definitions / Glossary

An AI companion is a software system designed to interact with users in an ongoing, conversational, and personalized way rather than perform a discrete task or give one-time answers.

Learn More About the Evidence Council

The Children and Screens Evidence Council is composed of leading researchers and clinicians convened to provide clear, evidence-based guidance on some of the most urgent and debated questions surrounding children, adolescents, and digital media.